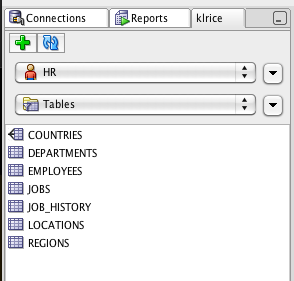

This is the beginning of what I plan on building up over time which is a library of functions for use to make custom script very easily. This is an example of adding the standard who columns to every table in the model.

The first two functions are to delete and add columns to a table.

// import actual java classes for use later

importPackage(javax.swing);

// variable to keep a status message for later

var msg="";

/*

Delete function takes in the table and name of the column to delete

*/

function deleteColumn(table,colName){

columns = table.getElements();

// iterate columns looking for the one to remove

for (var i = 0; i < columns.length; i++) {

if ( columns[i].getName().toUpperCase() == colName ){

columns[i].remove();

msg += "Deleted from "+ table.getName() + " : " + colName + "\n";

}

}

}

/*

checkOrCreate function takes in the table and name of the column to add. This checks for the existence of the column before addin

*/

function checkOrCreate(table,colName,typeName,typeSize){

hasCol = false;

columns = table.getElements();

for (var i = 0; i < columns.length; i++) {

column = columns[i];

if ( column.getName().toUpperCase() == colName ){

hasCol=true;

}

}

// if the column is not present add it

if (! hasCol ) {

newCol = table.createColumn();

newCol.setName(colName);

newCol.setUse(1);

// lookup the logical datatype based on the name

type = model.getDesign().getLogicalDatatypeSet().getLogTypeByName(typeName);

newCol.setLogicalDatatype(type);

if (typeSize != null ) {

newCol.setDataTypeParameter("size",typeSize);

}

msg += "Added to "+ table.getName() + " : " + colName+ "\n";

}

}

// grab all the table in the model as an array

tables = model.getTableSet().toArray();

for (var t = 0; t < tables.length;t++){

// remove all these from the table

deleteColumn(tables[t],"CREATED_BY");

deleteColumn(tables[t],"CREATED_ON");

deleteColumn(tables[t],"UPDATED_BY");

deleteColumn(tables[t],"UPDATED_ON");

// add them back with the specified datatypes

checkOrCreate(tables[t],"CREATED_BY", "VARCHAR",200);

checkOrCreate(tables[t],"CREATED_ON", "DATE");

checkOrCreate(tables[t],"UPDATED_BY", "VARCHAR",200);

checkOrCreate(tables[t],"UPDATED_ON", "DATE");

}

// notify the user what happened

JOptionPane.showMessageDialog(null, msg);

The end result of running this will be a dialog that shows all the actions performed.